Introduction

We’ve all been there. You’re deep into an RPG, the music is swelling, the stakes are high, and you walk up to a town guard to ask for directions. He looks you dead in the eye and says, for the four-hundredth time, “Patrolling the Mojave almost makes you wish for a nuclear winter.”

For decades, this has been the ceiling of immersion. As a gamer and someone who has poked around the backend of narrative design for years, I’ve always viewed Non-Player Characters (NPCs) as glorified vending machines. You put a coin in (interaction), and you get a pre-packaged snack (scripted dialogue) out. But the vending machine is breaking.

We are currently witnessing the most significant shift in game development since the transition from 2D to 3D. The integration of Generative AI and Large Language Models (LLMs) into game engines is fundamentally changing how characters think, speak, and react. It’s messy, it’s controversial, and quite frankly, it’s thrilling.

Here is a look at what is actually happening under the hood of AI-controlled characters, stripped of the marketing hype and grounded in the reality of game development.

From Finite States to Infinite Possibilities

To understand where we are going, we have to look at what we are leaving behind. Traditionally, game AI hasn’t really been “intelligence” in the cognitive sense. It has been a set of hand-authored rules, usually structured as Finite State Machines (FSMs) or Behavior Trees.

Think of the guards in Metal Gear Solid. They have states: Idle, Suspicious, Alert. If they hear a noise, the code switches them from Idle to Suspicious. If they see the player, they switch to Alert. It’s effective, but it’s rigid. It is a puppet show where the developer is pulling every single string.
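To make the rigidity concrete, here is a minimal sketch of that kind of guard as a finite state machine. The event names and the transition table are my own illustrative assumptions, not taken from any shipped game.

```python
from enum import Enum, auto


class GuardState(Enum):
    IDLE = auto()
    SUSPICIOUS = auto()
    ALERT = auto()


# Hypothetical transition table: (current state, event) -> next state.
# Every reaction the guard can ever have is written out by hand here.
TRANSITIONS = {
    (GuardState.IDLE, "heard_noise"): GuardState.SUSPICIOUS,
    (GuardState.IDLE, "saw_player"): GuardState.ALERT,
    (GuardState.SUSPICIOUS, "saw_player"): GuardState.ALERT,
    (GuardState.SUSPICIOUS, "timeout"): GuardState.IDLE,
    (GuardState.ALERT, "lost_player"): GuardState.SUSPICIOUS,
}


def next_state(state, event):
    """Return the next state, or stay in the current one if no rule matches."""
    return TRANSITIONS.get((state, event), state)


state = GuardState.IDLE
for event in ["heard_noise", "saw_player", "lost_player", "timeout"]:
    state = next_state(state, event)
    print(event, "->", state.name)
```

Anything that is not in the table simply does nothing, which is exactly why these characters feel scripted.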

The new wave of AI characters, often powered by tech stacks like Inworld AI or integrated OpenAI APIs, cuts the strings. Instead of writing a script, developers are now writing “character bibles.” You give the AI a personality, a backstory, a motivation, and knowledge of the world, and then you let it improvise.

I recently tested a modded version of The Elder Scrolls V: Skyrim integrated with an LLM. I walked up to an innkeeper, a character who usually has three lines of dialogue, and asked him about the political tension in the city. He didn’t give me a canned response. He sighed, wiped the counter (a triggered animation), and ranted about how the tax hikes were killing his business, dynamically referencing specific in-game events. It felt less like playing a game and more like stumbling into a living conversation.

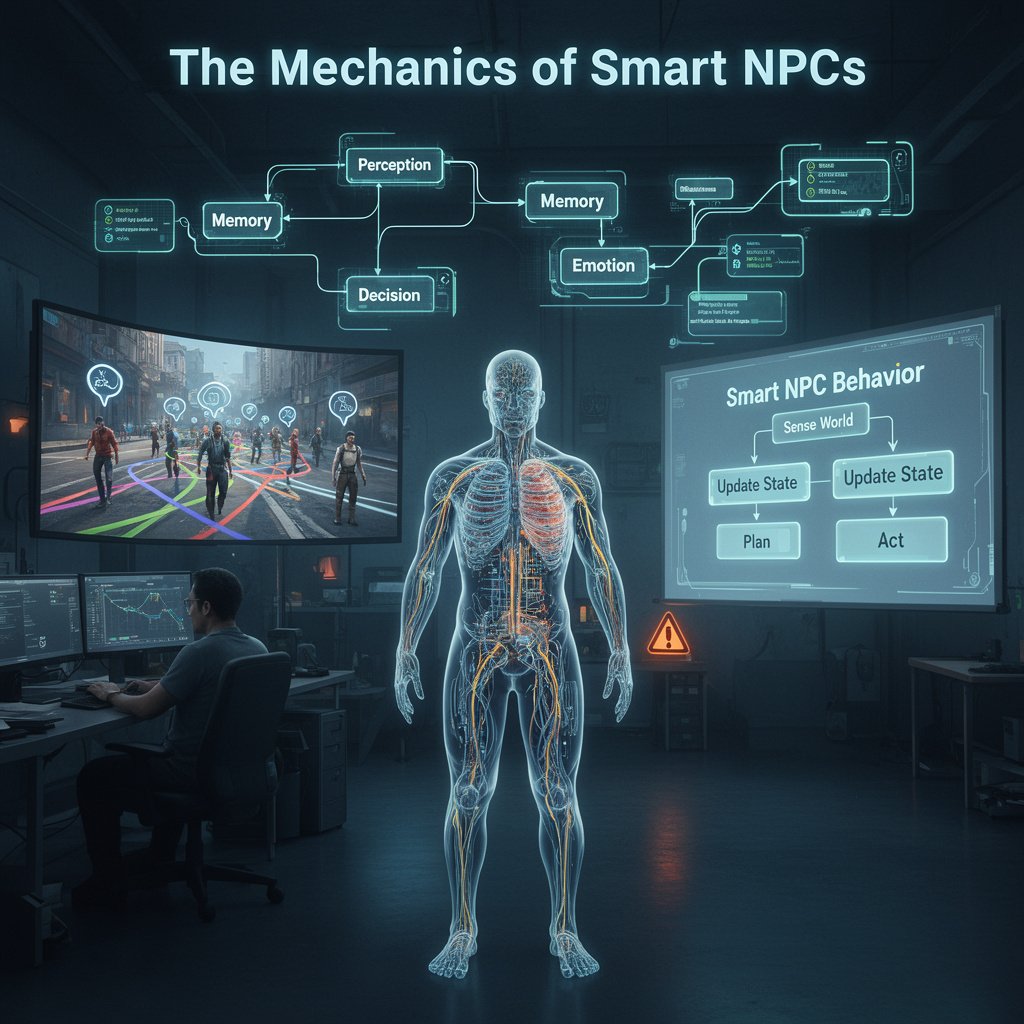

The Mechanics of “Smart” NPCs

So, how does this actually work? It’s not magic; it’s context management.

When you speak to an AI character via text or voice input (using speech-to-text), the game engine sends your input to a model. But it doesn’t just send your words. It wraps your input in a “System Prompt” that defines who the character is.

For example, the prompt might look like this:

“You are Eldric, a grumpy blacksmith. You distrust magic users. You are currently working on a sword. Keep answers short and gruff.”

If you ask Eldric for a sword, he won’t just say “Here is a sword.” He might say, “Fine steel, no enchantments. Don’t want any of that wizard nonsense in my forge.”
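As a rough sketch of that wrapping step, the snippet below assembles the payload a game might send to a chat-style model. The `build_messages` helper and the role/content message layout are assumptions for illustration (the layout mirrors a convention common to chat APIs), and no real model is called.

```python
# Persona text taken from the example prompt above.
ELDRIC = (
    "You are Eldric, a grumpy blacksmith. You distrust magic users. "
    "You are currently working on a sword. Keep answers short and gruff."
)


def build_messages(persona, history, player_input):
    """Wrap the player's line in a system prompt plus any earlier turns.

    Hypothetical helper: the system message defines who the character is,
    the history carries prior turns, and the player's words go in last.
    """
    messages = [{"role": "system", "content": persona}]
    messages.extend(history)
    messages.append({"role": "user", "content": player_input})
    return messages


messages = build_messages(ELDRIC, [], "Can I buy a sword?")
for message in messages:
    print(message["role"], ":", message["content"])
```

The player only ever types the last line; everything else is context the engine manages behind the scenes.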

The real breakthrough recently has been long-term memory. Early iterations of this tech were like goldfish; they’d forget your name five minutes later. Now, developers are using vector databases to store summaries of previous interactions and pull the relevant ones back into the prompt when you return. If you insulted that blacksmith three weeks ago in-game, he remembers. He might charge you double today. That persistence of memory creates a relationship loop that scripted trees simply cannot replicate without millions of lines of manual writing.
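Here is a toy sketch of the retrieval idea behind that memory. It assumes a bag-of-words count as a stand-in for a real embedding model and a plain Python list as a stand-in for a vector database; the `MemoryStore` class and the sample memories are hypothetical.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class MemoryStore:
    """Hypothetical in-memory replacement for a vector database."""

    def __init__(self):
        self.entries = []  # list of (vector, summary) pairs

    def add(self, summary):
        self.entries.append((embed(summary), summary))

    def recall(self, query, k=1):
        """Return the k stored summaries most similar to the query."""
        q = embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[0]), reverse=True)
        return [summary for _, summary in ranked[:k]]


memory = MemoryStore()
memory.add("player insulted the blacksmith and mocked his forge")
memory.add("player bought a loaf of bread from the baker")
memory.add("player helped the guard find a lost dog")

print(memory.recall("player returns to the blacksmith forge"))
```

The recalled summary would then be added to the character’s prompt, which is how the blacksmith “remembers” the insult without the whole conversation history being resent every time.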

The “Soulless” Problem: Quantity vs. Quality

However, I need to pump the brakes on the utopian vision. As much as the tech impresses me, it currently suffers from what I call the “Wikipedia Problem.”

AI characters are fantastic at generating information, but they often struggle with subtext, wit, and pacing. In a recent tech demo I analyzed, an AI detective solved a crime by simply explaining the clues in a flat, monotone paragraph. It lacked drama. It lacked the “human touch” of a writer holding back information to build suspense.

There is a distinct difference between content and story. Generative AI can create infinite content (endless quests, infinite dialogue), but that doesn’t mean it’s good. We saw a glimpse of this fatigue with the procedurally generated planets in Starfield. Just because you can go anywhere doesn’t mean there’s anything interesting to find.

If every NPC wants to have a twenty-minute philosophical debate with the player, the game’s pacing grinds to a halt. Good game writing is often about brevity, and LLMs are notoriously chatty.

The Ethical Minefield: Voice and Labor

We cannot discuss AI characters without addressing the elephant in the room: the human cost. This is the area where my enthusiasm turns into caution.

Dynamic dialogue needs dynamic voice acting. You can’t record lines for text that hasn’t been written yet. This relies on neural voice synthesis: AI that clones a voice to speak new words in real time.

This raises massive ethical and legal questions regarding voice actors. If a studio pays an actor for a session and then trains an AI on their voice to generate infinite lines forever, has the actor been fairly compensated? The answer, historically, has been “no.” The recent strikes within the industry highlight this exact fear.

For AI characters to be accepted by the gaming community, studios must adopt an ethical framework. This means licensing voices for specific durations, paying royalties on AI usage, and ensuring that “digital doubles” aren’t used to bypass union labor. Authenticity isn’t just about the software; it’s about the people behind the art.

The Future: A Hybrid Model

Where is this going? I don’t believe AI will replace narrative designers. Instead, we are heading toward a hybrid “Director” model.

In this future, the main quest, the emotional core of the game, will still be handcrafted by human writers and performed by human actors to ensure emotional resonance. You want that The Last of Us quality for the big moments.

However, the AI will populate the margins. The shopkeepers, the random pedestrians, the enemy grunts: these will be AI-driven. Imagine a stealth game where the guards don’t just walk in circles but actually communicate realistically, coordinating a search pattern based on where they think you went, yelling instructions that make sense in context.

The goal isn’t to simulate a person perfectly. The goal is to sustain the suspension of disbelief. We are moving from a world where games are static paintings to a world where they are improv theater. The actors might occasionally fluff a line or say something weird, but the feeling of being in a reactive, living world is worth the occasional glitch.

The vending machine is broken, and what’s replacing it is messy, loud, and unpredictable. And I can’t wait to play it.

Frequently Asked Questions

Do AI characters require an internet connection to work?

Currently, most high-end generative characters (like those using GPT-4 or Inworld) require a cloud connection because the processing power needed is too high for most consumer PCs. However, “Edge AI” and small language models (SLMs) are being developed to run directly on your hardware, which will eventually allow for offline play.

Will AI replace human game writers?

Unlikely. AI is a tool for scaling content, not replacing intent. While AI can handle “bark” dialogue (random chatter) or radiant quests, human writers are still essential for structuring the narrative arc, emotional beats, and complex character development that requires empathy and nuance.

Can AI NPCs go “rogue” and ruin the game story?

Developers use “guardrails” to prevent this. These are invisible rules that stop the AI from saying offensive things or breaking the game’s lore (e.g., preventing a medieval knight from talking about iPhones). However, hallucinations (making up false facts) are still a technical challenge developers are refining.
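As a very rough illustration of one such rule, the sketch below checks a generated reply against a lore blocklist before it reaches the player. The term list, the fallback line, and the `apply_guardrail` function are all hypothetical, and simple substring matching like this is far cruder than what a production system would need.

```python
# Hypothetical blocklist for a medieval setting.
ANACHRONISMS = ("iphone", "internet", "email", "smartphone")

# Hypothetical in-character line to use when a reply is rejected.
FALLBACK = "Hm. I know nothing of such things."


def apply_guardrail(reply):
    """Return the reply unchanged, or the fallback if it breaks the lore."""
    lowered = reply.lower()
    if any(term in lowered for term in ANACHRONISMS):
        return FALLBACK
    return reply


print(apply_guardrail("The king's taxes are too high."))
print(apply_guardrail("Let me check my iPhone, traveler."))
```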

How does this affect game performance (FPS)?

Running a neural network alongside a physics and rendering engine is taxing. Currently, the “inference” (thinking time) creates a slight delay, or latency, between you speaking and the character replying. Reducing this latency is the biggest technical hurdle for real-time action games.

Are there games playing with this tech right now?

Yes. While major AAA titles are still in development, games like Vaudeville (an AI detective game), tech demos like NVIDIA’s Covert Protocol, and extensive mods for Skyrim and Mount & Blade II: Bannerlord are currently showcasing this technology.