The first time I saw neural rendering in action, I genuinely couldn’t tell if I was looking at a photograph or a computer-generated image. It was during a tech demonstration at a graphics conference in 2022. The presenter showed a series of forest scenes: dappled sunlight filtering through leaves, moisture on bark, subtle atmospheric haze. When he revealed they were entirely synthetic, half the room audibly gasped.

That moment crystallized something I’d been watching develop for years. We’ve crossed into an era where distinguishing real from rendered requires careful examination. And the techniques making this possible are reshaping entertainment, design, and visual communication.

What Realism Enhancement Actually Means

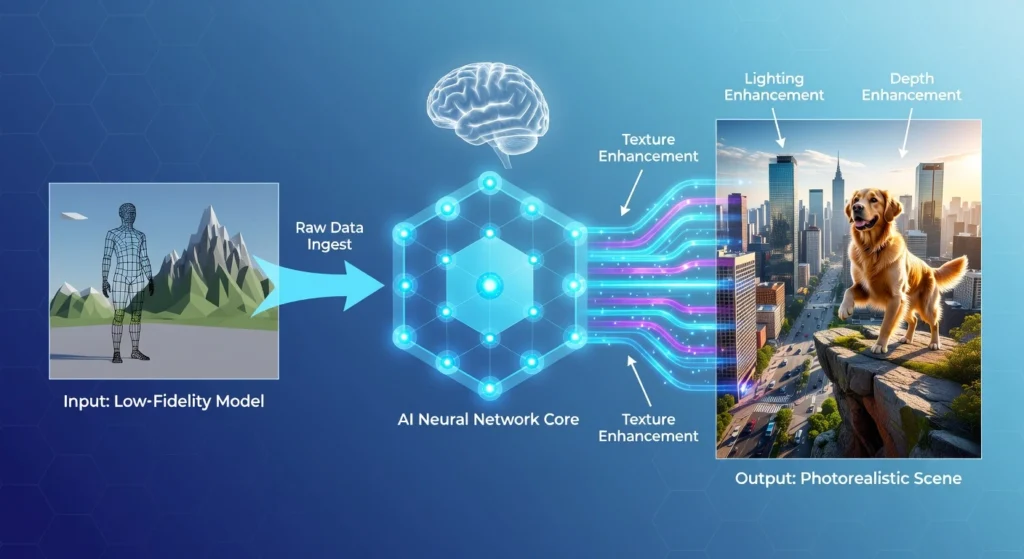

Let’s start with some clarity. When we talk about AI realism enhancement, we’re discussing a collection of techniques that use machine learning to improve visual, audio, and behavioral fidelity in digital content. These aren’t traditional rendering improvements achieved through more computational brute force. They’re intelligent systems that understand what realism looks like and work to achieve it efficiently.

The applications span gaming, film production, virtual reality, architectural visualization, product design, and, increasingly, real-time communication. Anywhere humans interact with digital imagery, these techniques are finding homes.

Visual Enhancement: The Most Visible Revolution

The most dramatic advances have occurred in visual enhancement, and several techniques deserve attention.

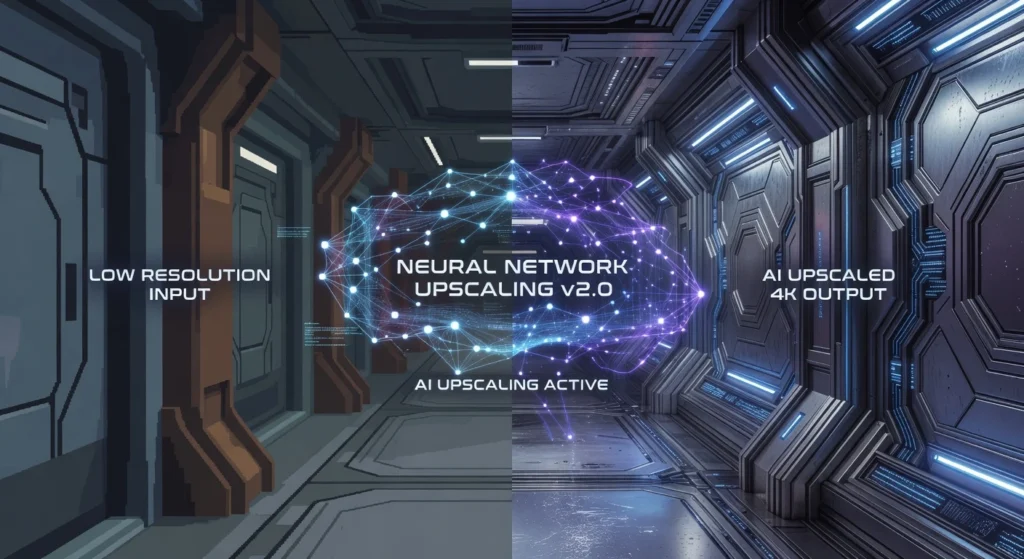

Intelligent Upscaling

NVIDIA’s Deep Learning Super Sampling (DLSS) changed everything when it launched. The concept seems almost paradoxical: render images at lower resolutions, then use trained neural networks to reconstruct them at higher resolutions with quality that often exceeds native rendering.

I’ve tested these systems extensively across various games and applications. The results genuinely impress. DLSS 3.5 can take a 1080p render and produce 4K output that looks sharper than many native 4K implementations, and AMD’s competing FSR technology pursues the same goal, though earlier FSR versions rely on hand-tuned rather than learned algorithms. In the neural case, the networks have learned what detail should exist in high-resolution images and can intelligently reconstruct it.

For users, this means smoother framerates without sacrificing visual quality. For developers, it means ambitious visual designs become achievable on mainstream hardware.
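To put rough numbers on that trade, here is a minimal sketch of the pixel budget for the 1080p to 4K case. It is plain arithmetic, not anything from the DLSS or FSR SDKs, and the function names are my own for illustration.

```python
# Pixel budget arithmetic for "render low, reconstruct high" upscaling.
# Function names are illustrative; nothing here comes from an upscaler SDK.

def pixel_count(width: int, height: int) -> int:
    """Total pixels in a frame of the given size."""
    return width * height


def shading_savings(render_res, output_res):
    """Return (per-axis scale factor, fraction of output pixels actually rendered)."""
    render_w, render_h = render_res
    output_w, output_h = output_res
    axis_scale = output_w / render_w
    rendered_fraction = pixel_count(render_w, render_h) / pixel_count(output_w, output_h)
    return axis_scale, rendered_fraction


# Standard 1080p render target reconstructed to 4K UHD output.
axis_scale, rendered_fraction = shading_savings((1920, 1080), (3840, 2160))
print(f"Upscale per axis: {axis_scale:.1f}x")
print(f"Pixels rendered: {rendered_fraction:.0%} of the output frame")
```

The renderer shades only a quarter of the pixels that reach the screen; the reconstruction step has to supply the rest, which is where the framerate headroom comes from.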

Ray Tracing Denoising

Here’s something most users don’t realize. Real-time ray tracing produces incredibly noisy images. We’re shooting relatively few rays per pixel compared to offline rendering, and the raw output looks like static on an old television.

Neural denoisers transform that noise into clean, coherent imagery. These systems understand what light should look like, how reflections behave, how shadows should fall. They don’t just blur the noise away; they intelligently reconstruct the image based on a learned understanding of physical light behavior.

I remember comparing ray-traced reflections before and after denoising during a development project. The raw traced image was unusable. After the denoiser? Photorealistic water reflections that responded correctly to every light source in the scene.
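The noise problem is easy to reproduce in miniature. The sketch below is a toy, not a real ray tracer or a neural denoiser: it assumes a uniformly lit surface where each ray reaches the light with a fixed probability, and it uses a plain box blur as a stand-in for the denoiser. All names and numbers are made up for illustration.

```python
import random
import statistics


def render_row(samples_per_pixel, width=256, true_radiance=0.5, seed=0):
    """Monte Carlo estimate for one row of pixels on a uniformly lit surface.

    Each sample counts as 1.0 if a random ray reaches the light, else 0.0,
    so every pixel should converge to true_radiance given enough samples.
    """
    rng = random.Random(seed)
    row = []
    for _ in range(width):
        hits = sum(1.0 for _ in range(samples_per_pixel) if rng.random() < true_radiance)
        row.append(hits / samples_per_pixel)
    return row


def box_filter(row, radius=4):
    """Average each pixel with its neighbors (a crude, non-neural denoiser)."""
    filtered = []
    for i in range(len(row)):
        window = row[max(0, i - radius): i + radius + 1]
        filtered.append(sum(window) / len(window))
    return filtered


def noise(row):
    """Spread of pixel values; the ideal image here is perfectly flat."""
    return statistics.pstdev(row)


raw_1spp = render_row(samples_per_pixel=1)
raw_64spp = render_row(samples_per_pixel=64)
filtered_1spp = box_filter(raw_1spp)

print(f"noise at 1 sample/pixel:   {noise(raw_1spp):.3f}")
print(f"noise at 64 samples/pixel: {noise(raw_64spp):.3f}")
print(f"1 sample/pixel, blurred:   {noise(filtered_1spp):.3f}")
```

A blur like this only works because the toy surface is flat. On a real image it would smear edges and reflections, which is the gap a learned denoiser is meant to close.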

Neural Texture Synthesis

Creating realistic textures traditionally required either photographing real-world surfaces or painstaking manual creation. Neural texture synthesis changes this equation. Systems can now generate tileable, realistic textures from minimal input, sometimes just a rough sketch or a few reference samples.

More impressively, these techniques can enhance existing low-resolution textures. Machine learning models trained on millions of real-world surface images can intelligently add detail that wasn’t present in the original, inferring what grain patterns, surface irregularities, and micro-details should exist.

Character Realism: The Uncanny Valley Bridge

Perhaps nowhere is realism enhancement more challenging or more impactful than in digital humans.

Facial Animation Enhancement

Epic Games’ MetaHuman technology demonstrates current capabilities remarkably well. The system generates photorealistic digital humans, but more importantly, it handles the subtle animation details that typically break immersion. Micro-expressions, skin deformation, eye moisture, and subsurface light scattering through skin are all handled through techniques that understand how real faces behave.

I’ve watched sessions where animators worked with these systems, and the workflow transformation is substantial. What once required teams of specialists over weeks now happens in hours. The neural systems handle the thousands of small details that make faces feel alive.

Motion Enhancement

Captured motion data rarely translates directly to convincing animation. Marker occlusion creates gaps. Different body proportions between actors and characters create artifacts. Traditional cleanup required extensive manual work.

Modern neural approaches analyze motion data, understand biomechanical constraints, and intelligently fill gaps or adapt movements to different body types. Some systems can even generate realistic secondary motions (the way clothing moves, how hair responds to head turns) from primary motion data alone.
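For a sense of what gap filling means at its simplest, here is a sketch of the non-neural baseline: linear interpolation across frames where a marker was occluded. The `fill_gaps` helper and the sample track are hypothetical. A learned system would replace the straight-line guess with one that respects how bodies actually move.

```python
def fill_gaps(track):
    """Fill None entries in a marker track by linear interpolation.

    Gaps between two known samples are interpolated; gaps at the start or
    end of the track hold the nearest known value.
    """
    known = [i for i, value in enumerate(track) if value is not None]
    if not known:
        raise ValueError("track has no valid samples")

    filled = list(track)
    first, last = known[0], known[-1]

    # Hold the nearest known value at the edges of the track.
    for i in range(first):
        filled[i] = track[first]
    for i in range(last + 1, len(track)):
        filled[i] = track[last]

    # Interpolate across each interior gap.
    for start, end in zip(known, known[1:]):
        for i in range(start + 1, end):
            t = (i - start) / (end - start)
            filled[i] = track[start] + t * (track[end] - track[start])
    return filled


# Hypothetical vertical position of one marker, with occluded frames as None.
marker_y = [1.0, 1.2, None, None, 1.8, None, 2.0]
repaired = fill_gaps(marker_y)
print([round(value, 3) for value in repaired])
```

Straight lines are fine for a two-frame dropout, but over longer gaps they produce the floaty, robotic motion that manual cleanup used to fix.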

Audio Realism: The Overlooked Dimension

Visual enhancement gets the headlines, but audio realism techniques deserve recognition. Neural audio systems now generate realistic environmental acoustics, enhance recorded dialogue, and create convincing spatial audio from stereo sources.

I’ve worked with audio designers who use these tools for game development. One described neural reverb modeling as “finally giving us physically accurate room acoustics without killing our CPU budget.” The systems understand how sound behaves in spaces and can generate realistic acoustic responses in real time.
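For context on where that CPU budget goes, the conventional way to apply a room's acoustics is to convolve the dry signal with the room's impulse response. The sketch below shows that classic operation, not a neural model, and the impulse response values are invented for illustration.

```python
def convolve(signal, impulse_response):
    """Direct-form convolution: every input sample triggers a scaled copy
    of the impulse response, and the copies are summed."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, sample in enumerate(signal):
        for j, tap in enumerate(impulse_response):
            out[i + j] += sample * tap
    return out


# A single click as the dry signal.
dry = [1.0, 0.0, 0.0, 0.0]
# Made-up room response: direct sound followed by two decaying echoes.
room_ir = [1.0, 0.0, 0.5, 0.25]

wet = convolve(dry, room_ir)
print(wet)
```

The nested loop costs one multiply per input sample per impulse response tap, and real room responses can run to many thousands of taps, which is why cheaper approximations are attractive.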

Real-World Applications

These techniques converge powerfully in several industries.

Automotive designers visualize vehicles in photorealistic environments before physical prototypes exist. Architects walk clients through buildings that don’t exist yet but look indistinguishable from photography. Film productions reduce costs by enhancing practical footage with neural techniques rather than expensive reshoots.

Virtual production stages like those used for The Mandalorian leverage real-time rendering with AI enhancement to create believable environments that actors can actually see and interact with during filming.

Limitations Worth Acknowledging

These technologies aren’t perfect. Temporal stability remains challenging: flickering and artifacts can occur between frames. Unusual content that differs from training data produces poor results. Computational requirements, while reduced from brute-force alternatives, remain significant.

And there are legitimate concerns about misuse. Technology that makes synthetic imagery indistinguishable from reality raises obvious questions about deception and misinformation.

Looking Forward

The trajectory points toward real-time photorealism becoming routine rather than exceptional. As these techniques mature and hardware evolves, the gap between real and rendered continues to narrow.

What excites me most is democratization. Techniques that once required massive studio budgets are becoming accessible to independent creators. That shift will produce creative applications we haven’t imagined yet.

Frequently Asked Questions

What are AI realism enhancement techniques?

Machine learning methods that improve visual, audio, and behavioral fidelity in digital content by intelligently understanding and recreating realistic characteristics.

How does DLSS improve image quality?

It renders at lower resolution, then uses neural networks trained on high-quality images to reconstruct detail, often exceeding native rendering quality.

Do these techniques work in real time?

Yes, many implementations run in real time on consumer hardware, enabling applications in gaming, virtual reality, and live production.

What industries use realism enhancement?

Gaming, film production, architectural visualization, automotive design, virtual reality, product design, and broadcast production all utilize these techniques.

Are there limitations to current technology?

Yes, including temporal stability issues, artifacts with unusual content, computational demands, and challenges with extreme edge cases.

Does this technology raise ethical concerns?

Absolutely. Photorealistic synthetic imagery raises legitimate concerns about deepfakes, misinformation, and digital deception that the industry continues to address.