I’ve watched cheating evolve in online games for nearly two decades now, from simple aimbots in early Counter-Strike servers to sophisticated hardware-level hacks that can fool even the most advanced detection systems. It’s an arms race that never really ends. But something shifted around 2020, when major developers started seriously implementing AI-powered anti-cheat systems, and honestly, the results have been both impressive and concerning in equal measure.

Beyond Traditional Signatures

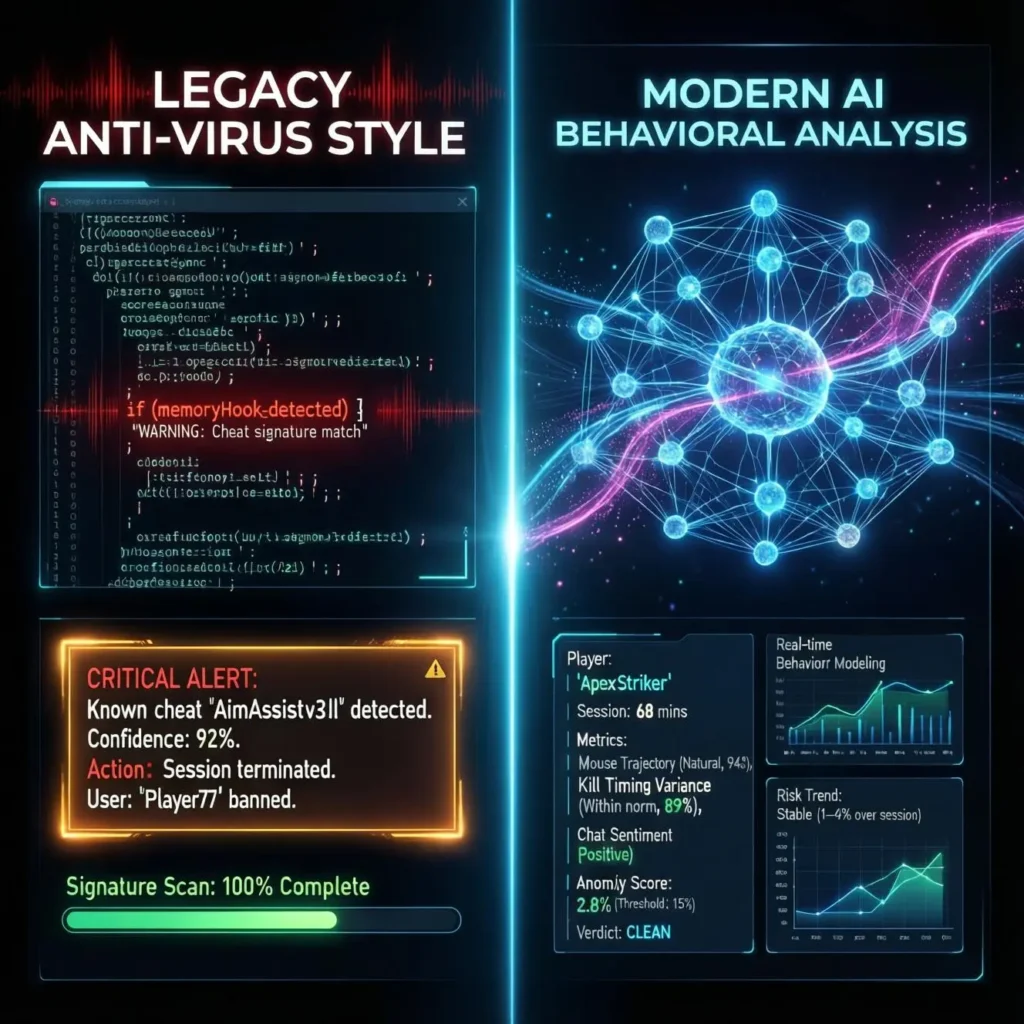

Traditional anti-cheat software worked like an antivirus program: scanning for known cheat signatures, blocking suspicious processes, and checking memory for modifications. Companies like Easy Anti-Cheat and BattlEye built their reputations on these methods. The problem? Cheat developers caught on fast. They’d update their code, change signatures, and within hours of a detection wave, new undetected versions would hit the market.

AI-based anti-cheat fundamentally changes this cat-and-mouse game. Instead of looking for specific cheat signatures, machine learning models analyze player behavior patterns. Think about it: a human player, even a professional one, has natural inconsistencies. Your reaction time varies by tens of milliseconds from one fight to the next. You miss shots. You check corners somewhat randomly, based on game sense and audio cues. Cheating software, particularly aimbots and wallhacks, creates statistically abnormal patterns that machine learning can identify.
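
To make “statistically abnormal” concrete, here is a deliberately tiny Python sketch of one such signal: how much a player’s reaction times vary. It is not how any shipping anti-cheat works. The `min_cv` threshold of 0.08 and the 10-sample minimum are hypothetical values chosen for illustration, and real systems feed many features into a trained model rather than relying on one hand-set cutoff.

```python
import statistics

def coefficient_of_variation(samples_ms: list[float]) -> float:
    """Sample standard deviation divided by the mean: spread without units."""
    return statistics.stdev(samples_ms) / statistics.fmean(samples_ms)

def is_suspiciously_consistent(samples_ms: list[float], min_cv: float = 0.08) -> bool:
    """Flag reaction times that vary less than an assumed human floor.

    min_cv is a hypothetical threshold, not a published value.
    """
    if len(samples_ms) < 10:
        return False  # too few engagements to judge either way
    return coefficient_of_variation(samples_ms) < min_cv

# Illustrative data only: a jittery human versus a metronomic script.
human = [210, 245, 198, 260, 231, 190, 275, 222, 240, 205]
script = [151, 150, 152, 150, 151, 150, 151, 152, 150, 151]
print(is_suspiciously_consistent(human))   # False (CV is about 0.12)
print(is_suspiciously_consistent(script))  # True (CV is about 0.005)
```

The check looks for too little variation, not too much skill. A fast player with human jitter passes, while a slower but perfectly regular script does not.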

How It Actually Works

I spoke with several developers who work in this space (under NDA, naturally), and the basic framework goes something like this: the system collects thousands of data points during gameplay, including mouse movements, reaction times, viewing angles, shooting accuracy under various conditions, movement patterns, and even how players respond to information they shouldn’t have access to.
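
Raw telemetry like this is usually condensed into per-match features before a model ever sees it. The sketch below assumes a hypothetical `Engagement` record. The field names are mine, not any vendor’s schema. The code turns one match’s worth of fights into three summary numbers that a classifier could consume.

```python
import statistics
from dataclasses import dataclass

@dataclass
class Engagement:
    """One fight, as a hypothetical telemetry record."""
    reaction_ms: float  # enemy first visible -> first shot fired
    shots_fired: int
    shots_hit: int
    headshots: int

def summarize(engagements: list[Engagement]) -> dict[str, float]:
    """Collapse per-fight records into per-match features."""
    fired = sum(e.shots_fired for e in engagements)
    hit = sum(e.shots_hit for e in engagements)
    heads = sum(e.headshots for e in engagements)
    return {
        "mean_reaction_ms": statistics.fmean(e.reaction_ms for e in engagements),
        "accuracy": hit / fired if fired else 0.0,
        "headshot_ratio": heads / hit if hit else 0.0,
    }

fights = [Engagement(200, 10, 5, 2), Engagement(300, 10, 3, 1)]
print(summarize(fights))
# {'mean_reaction_ms': 250.0, 'accuracy': 0.4, 'headshot_ratio': 0.375}
```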

Take VALORANT’s Vanguard system as a concrete example. Riot Games implemented both kernel-level traditional anti-cheat AND behavioral analysis powered by machine learning. The behavioral component asks questions like these: Do you consistently pre-fire angles where enemies happen to be? Is your crosshair placement suspiciously perfect before encounters? Do your flick shots have a mechanical consistency that exceeds human capability?
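
The pre-fire question lends itself to a simple significance test. Suppose, hypothetically, that an honest player’s pre-fires land on an actually hidden enemy about 30% of the time, using only map knowledge and sound. The sketch below asks how likely a given record would be under that assumed baseline. The 30% figure is invented for illustration, and this is not a description of how Vanguard’s behavioral checks work.

```python
from math import comb

def binomial_tail(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

def prefire_p_value(on_hidden_enemy: int, total_prefires: int,
                    baseline_rate: float = 0.3) -> float:
    """Chance an honest player would be right this often from luck and game sense.

    baseline_rate is a hypothetical honest hit rate, not a measured one.
    """
    return binomial_tail(on_hidden_enemy, total_prefires, baseline_rate)

print(prefire_p_value(6, 20))   # about 0.58: ordinary
print(prefire_p_value(18, 20))  # about 3.8e-08: very hard to explain as luck
```

A tiny p-value is grounds for a flag and a closer look, not proof. Across millions of players, some honest ones will produce unlikely streaks.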

One fascinating case from 2022 involved a player who got flagged by the system despite not using traditional cheats. It turned out he was using a hardware device that reduced mouse input delay to inhuman levels, combined with sound-based ESP (a radar hack using 3D audio positioning). Traditional anti-cheat wouldn’t have caught this, because no software modification occurred. The AI flagged the statistically impossible consistency in his pre-aim timing.

Real World Implementations

Activision’s RICOCHET for Call of Duty: Warzone represents one of the most aggressive implementations. Launched in late 2021, it combines a kernel-level driver with machine learning that analyzes everything from ballistics data to movement mechanics. Activision has even implemented a Damage Shield for legitimate players. When the system detects a cheater, it can make legitimate players temporarily invincible against that cheater while it collects more data to confirm the ban.
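
Activision hasn’t described how the Damage Shield is built, so what follows is only a hypothetical Python sketch of the idea as described above. Hits from a flagged player on unflagged players are zeroed out, but each one is still logged as extra evidence. The `ShieldedMatch` class and its fields are invented for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class ShieldedMatch:
    """Hypothetical per-match state for a damage-shield style mitigation."""
    flagged: set[str]                                       # players the model suspects
    evidence: dict[str, int] = field(default_factory=dict)  # shielded hits per suspect

    def resolve_hit(self, attacker: str, victim: str, damage: float) -> float:
        """Return the damage actually applied to the victim."""
        if attacker in self.flagged and victim not in self.flagged:
            # Shield the legitimate player, but keep the hit as evidence.
            self.evidence[attacker] = self.evidence.get(attacker, 0) + 1
            return 0.0
        return damage

lobby = ShieldedMatch(flagged={"suspect"})
print(lobby.resolve_hit("suspect", "alice", 30.0))  # 0.0: alice is shielded
print(lobby.resolve_hit("alice", "suspect", 30.0))  # 30.0: suspect takes full damage
print(lobby.evidence)                               # {'suspect': 1}
```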

CS2 (Counter-Strike 2) uses what Valve calls “VacNet,” a deep learning system first presented for CS:GO. It works alongside Overwatch, Valve’s system in which experienced players review reported match demos. VacNet is trained on thousands of reviewed cases where human investigators confirmed cheating. It now flags suspects with scary accuracy, sending only the highest-confidence cases to human reviewers and automatically banning the most blatant offenders.
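
The routing described here can be sketched in a few lines: automatic bans for the most confident cases and a capped human-review queue for the next tier. The 0.99 and 0.7 cutoffs and the review capacity below are hypothetical, not Valve’s. The scores stand in for whatever a trained model would output.

```python
def triage(scores: dict[str, float], review_capacity: int,
           review_threshold: float = 0.7,
           auto_ban_threshold: float = 0.99) -> tuple[list[str], list[str]]:
    """Split model scores into auto-bans and a capped human-review queue."""
    auto_ban = sorted(p for p, s in scores.items() if s >= auto_ban_threshold)
    # Middle tier, most confident first, trimmed to what reviewers can handle.
    middle = sorted(
        ((s, p) for p, s in scores.items() if review_threshold <= s < auto_ban_threshold),
        reverse=True,
    )
    review_queue = [p for _, p in middle[:review_capacity]]
    return auto_ban, review_queue

scores = {"a": 0.995, "b": 0.90, "c": 0.75, "d": 0.80, "e": 0.20}
print(triage(scores, review_capacity=2))  # (['a'], ['b', 'd'])
```

The queue cap is what keeps reviewers focused on the strongest cases. Player `c` clears the review threshold but misses this round’s cut.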

The results seem to speak for themselves. Warzone saw cheating complaints drop by roughly 60% in the months following RICOCHET’s full deployment, at least according to community sentiment tracking (official numbers are, predictably, kept close to the chest).

The Privacy Question Nobody Likes Talking About

Here’s where things get uncomfortable. Effective AI anti-cheat requires data, and lots of it. Vanguard runs at the Windows kernel level from system boot, which means it has deep access to your computer. It constantly monitors processes, hardware configurations, and system behavior.

I understand why developers do this. Cheats have moved to kernel-level and even hardware-based solutions. To detect them, anti-cheat must operate at the same privilege level. But we’re essentially installing corporate rootkits on our gaming PCs, trusting companies with extraordinary access to our systems.

The security research community has raised legitimate concerns. Any kernel-level software becomes a potential attack vector if compromised. Genshin Impact’s kernel-level anti-cheat driver, mhyprot2.sys, is the cautionary example: in 2022, Trend Micro researchers reported that ransomware operators were abusing it to kill antivirus processes. These aren’t theoretical risks; they’re real trade-offs between competitive integrity and personal security.

False Positives and the Human Cost

No system is perfect. I’ve personally known two competitive players who faced temporary bans from AI anti-cheat systems. Both bans were eventually reversed, but the damage was real: missed tournament opportunities, reputation hits within their communities, and the frustrating experience of proving their innocence.

The challenge with machine learning is that it works with probabilities, not certainties. A highly skilled player having an exceptional game can sometimes trigger the same statistical patterns as someone using a low-FOV (field of view) aimbot. This is why most serious implementations use AI for flagging and investigation, with human review before permanent bans.
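
A quick base-rate calculation shows why that human review matters. All of the numbers below are hypothetical: one million players, 1% of them cheating, a detector that catches 95% of cheaters, and a false-positive rate of just 0.1% on honest players.

```python
def flag_outcomes(players: int, cheat_rate: float, sensitivity: float,
                  false_positive_rate: float) -> tuple[float, float, float]:
    """Expected true flags, false flags, and precision for a detector."""
    cheaters = players * cheat_rate
    honest = players - cheaters
    true_flags = cheaters * sensitivity
    false_flags = honest * false_positive_rate
    precision = true_flags / (true_flags + false_flags)
    return true_flags, false_flags, precision

tp, fp, precision = flag_outcomes(1_000_000, 0.01, 0.95, 0.001)
print(round(tp), round(fp), round(precision, 3))  # 9500 990 0.906
```

Even with a detector this good on paper, roughly one flag in ten would land on an innocent player. That works out to about 990 people per million, which is why a flag should start an investigation rather than end one.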

Looking Forward

The next frontier seems to be real-time intervention rather than just detection. Imagine systems that can identify and neutralize cheats mid-match without disrupting legitimate players; we’re already seeing early versions. AI could potentially modify game parameters for suspected cheaters: slightly delaying their inputs, reducing their damage output, or subtly altering their game state to confirm suspicious behavior.

There’s also growing interest in federated learning approaches, where anti-cheat AI could be trained across multiple games without sharing raw player data, improving detection while potentially easing privacy concerns.
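
Federated averaging (FedAvg) is the best-known federated learning algorithm, and its core aggregation step is simple enough to sketch. Each game trains on its own players’ data and sends back only model weights. A coordinator then averages those weights in proportion to how much data each game trained on. The two-game setup and toy weight vectors below are hypothetical. A real deployment would likely involve many training rounds, far larger models, and extra protections around the shared updates.

```python
def federated_average(updates: list[tuple[list[float], int]]) -> list[float]:
    """Weighted average of client model weights (the FedAvg aggregation step).

    Each update is (weights, number_of_local_training_samples). Raw player
    data never appears here; only the trained weights are shared.
    """
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all clients must share the same model shape")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total
    return averaged

# Hypothetical: game A trained on 100 samples, game B on 300.
print(federated_average([([1.0, 2.0], 100), ([3.0, 4.0], 300)]))  # [2.5, 3.5]
```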

The Bottom Line

AI anti-cheat technology works; that’s undeniable. Games implementing sophisticated machine learning systems have seen noticeable decreases in cheating prevalence. But effectiveness comes with legitimate costs to privacy and system security, and occasionally to innocent players caught in false positives.

As someone who’s watched too many games become unplayable due to rampant cheating (looking at you, pre-RICOCHET Warzone), I’d argue the trade-off is generally worthwhile. But that doesn’t mean we shouldn’t demand transparency, accountability, and ongoing improvements in accuracy from developers deploying these systems.

The war against cheaters will never truly end, but AI has given developers better weapons than they’ve ever had before.

FAQs

What is AI anti-cheat in gaming?

AI anti-cheat uses machine learning to analyze player behavior patterns and statistics to identify cheating, rather than just scanning for known cheat software signatures.

Is AI anti-cheat better than traditional methods?

Generally yes, because it can detect new and unknown cheats from behavioral anomalies rather than requiring specific signatures. It’s most effective when combined with traditional methods.

Which games use AI anti-cheat?

Major implementations include VALORANT (Vanguard), Call of Duty (RICOCHET), Counter-Strike 2 (VacNet), and Rainbow Six Siege, among others.

Can AI anti-cheat make mistakes?

Yes, false positives can occur, though good systems use AI for flagging, with human review before permanent bans. Highly skilled players occasionally trigger suspicious patterns.

Is kernel-level anti-cheat safe?

It involves trade-offs. While generally safe from reputable developers, any kernel-level software presents potential security risks if compromised and requires significant system trust.

Does AI anti-cheat invade privacy?

It requires collecting extensive gameplay data and system information. The privacy implications depend on implementation specifics and individual comfort levels with data collection.