I’ve spent the better part of fifteen years watching game AI evolve from simple state machines to something genuinely remarkable. Back when I started in game development, enemy NPCs followed predetermined patrol routes, and we celebrated when they could navigate around a corner without getting stuck. Now? We’re building systems that learn, adapt, and sometimes surprise even their creators.

The shift happening right now in game AI architecture isn’t just incremental improvement. It’s a fundamental rethinking of how intelligent agents operate within virtual worlds.

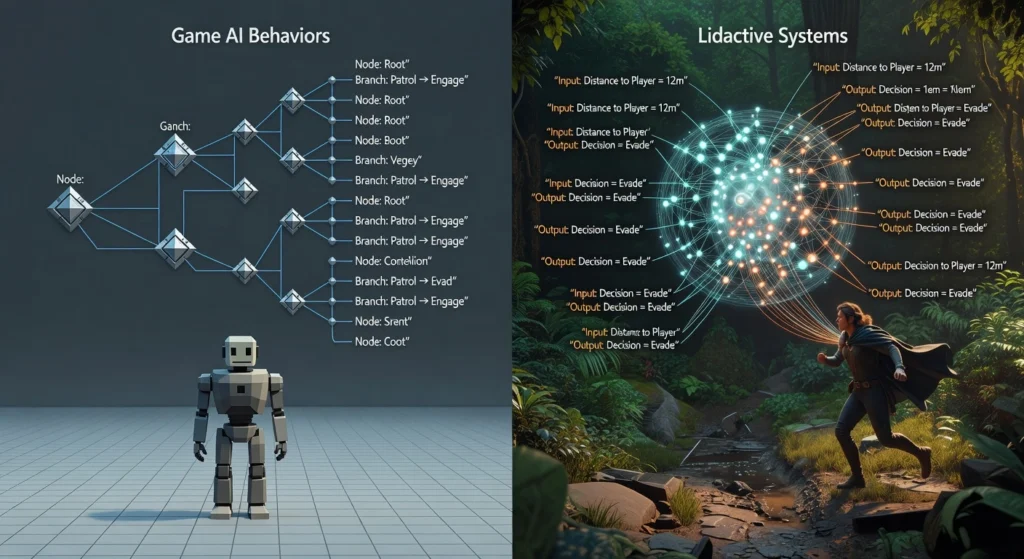

Beyond Traditional Behavior Trees

For years, behavior trees dominated game AI. They’re elegant, predictable, and relatively easy to debug: three qualities any game developer appreciates at 2 AM before a deadline. But they have a ceiling. Eventually you reach a point where adding more branches multiplies complexity without meaningfully improving the player experience.

What’s replacing them isn’t a single architecture but a toolkit of approaches that developers mix and match based on specific needs.

Utility AI systems have gained serious traction over the past few years. Instead of rigid if-then logic, these systems score potential actions based on multiple weighted factors. An NPC might consider its health, proximity to threats, available resources, and even its personality traits before deciding whether to fight, flee, or negotiate. The results feel more organic because they are: decisions emerge from contextual evaluation rather than predetermined scripts.
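To make the scoring idea concrete, here’s a minimal sketch of a utility selector for the fight/flee/negotiate example. The state fields, the 20-metre threat range, and every weight are hypothetical values I picked for illustration; a real project would tune its own curves and usually normalize scores more carefully.

```python
from dataclasses import dataclass


@dataclass
class NPCState:
    health: float           # 0.0 (dead) to 1.0 (full)
    threat_distance: float  # metres to the nearest threat
    ammo: float             # 0.0 (empty) to 1.0 (full)
    bravery: float          # personality trait, 0.0 to 1.0


def clamp01(x: float) -> float:
    return max(0.0, min(1.0, x))


# All weights below are hypothetical illustration values.
def score_fight(s: NPCState) -> float:
    # Healthy, well-armed, brave NPCs lean toward fighting.
    return s.health * 0.4 + s.ammo * 0.3 + s.bravery * 0.3


def score_flee(s: NPCState) -> float:
    # Low health, a close threat, and timidity push toward fleeing.
    closeness = clamp01(1.0 - s.threat_distance / 20.0)
    return (1.0 - s.health) * 0.6 + closeness * 0.2 + (1.0 - s.bravery) * 0.2


def score_negotiate(s: NPCState) -> float:
    # Talking looks attractive when the threat is far away and ammo is low.
    distance = clamp01(s.threat_distance / 20.0)
    return distance * 0.5 + (1.0 - s.ammo) * 0.5


ACTIONS = {"fight": score_fight, "flee": score_flee, "negotiate": score_negotiate}


def choose_action(s: NPCState):
    """Score every action and return the best one along with all scores."""
    scores = {name: fn(s) for name, fn in ACTIONS.items()}
    return max(scores, key=scores.get), scores


print(choose_action(NPCState(health=0.1, threat_distance=3.0, ammo=0.5, bravery=0.2))[0])
```

The same NPC type fights when healthy and brave, flees when badly wounded, and tries talking when out of ammo with the threat still distant. No branch was written for any of those cases; the behavior falls out of the scores.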

I worked on a project last year where we implemented utility AI for companion characters. The difference was night and day. Players stopped complaining that companions felt robotic. Instead, they started forming genuine attachments because the characters responded to situations in believably human ways.

Neural Networks Enter the Arena

Machine learning architectures have moved from research papers into production games. Not everywhere yet, but the momentum is undeniable.

Reinforcement learning produces some of the most impressive results we’re seeing. Training agents through repeated play sessions allows them to discover strategies that human designers never anticipated. OpenAI Five demonstrated this years ago with Dota 2, but now smaller studios are implementing similar approaches for specialized tasks.
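At a vastly smaller scale than anything like OpenAI Five, the core loop of “learn from repeated play” can be shown with tabular Q-learning. This sketch assumes a toy five-cell corridor with a goal at the far end; the rewards, learning rate, discount, and exploration rate are all hypothetical choices. Nobody tells the agent to walk right. It discovers that from its own trial and error.

```python
import random

N_STATES = 5        # positions 0..4; position 4 is the goal
ACTIONS = (-1, +1)  # move left or move right


def step(state: int, action: int):
    """Toy environment: small penalty per move, +1 for reaching the goal."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    done = nxt == N_STATES - 1
    reward = 1.0 if done else -0.01
    return nxt, reward, done


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action_index]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: explore occasionally; ties break toward moving right.
            if rng.random() < epsilon:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            nxt, reward, done = step(state, ACTIONS[a])
            # Q-learning update toward reward plus discounted best future value.
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][a] += alpha * (target - q[state][a])
            state = nxt
    return q


def greedy_policy(q):
    """Best learned move for each non-goal position."""
    return [ACTIONS[0] if q[s][0] > q[s][1] else ACTIONS[1] for s in range(N_STATES - 1)]


print(greedy_policy(train()))
```

Production systems replace the table with a neural network and the corridor with a full game, which is exactly where the training cost discussed next comes from.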

The challenge? Training time and computational cost remain significant barriers. Most studios can’t afford to run training simulations for weeks on server clusters. That’s why hybrid approaches have become popular: neural networks handle specific decision layers while traditional systems provide the baseline behaviors.
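One common way to structure such a hybrid is sketched below, under assumptions of my own. Scripted rules own every decision by default, and a learned model is consulted only for one layer (here, which combat ability to use). Its answer is accepted only above a confidence threshold. `CombatPolicy`, `stub_policy`, the ability names, and the 0.6 threshold are all hypothetical; `stub_policy` simply stands in for a trained network.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Observation:
    enemy_visible: bool
    enemy_health: float  # 0.0 to 1.0


# Hypothetical interface: a trained model mapping an observation to
# action probabilities for ONE decision layer (combat ability choice).
CombatPolicy = Callable[[Observation], Dict[str, float]]


def scripted_baseline(obs: Observation) -> str:
    # Designer-authored rules cover every situation by default.
    return "basic_attack" if obs.enemy_visible else "patrol"


def hybrid_decide(obs: Observation, policy: CombatPolicy, min_confidence: float = 0.6) -> str:
    baseline = scripted_baseline(obs)
    if baseline != "basic_attack":
        return baseline  # non-combat behavior stays fully scripted
    probs = policy(obs)
    best = max(probs, key=probs.get)
    # Trust the learned layer only when it is confident; otherwise fall back.
    return best if probs[best] >= min_confidence else baseline


def stub_policy(obs: Observation) -> Dict[str, float]:
    # Stand-in for a neural network: confident about finishing weak enemies.
    if obs.enemy_health < 0.3:
        return {"finisher": 0.9, "shield": 0.1}
    return {"finisher": 0.5, "shield": 0.5}


print(hybrid_decide(Observation(True, 0.2), stub_policy))
```

The fallback is the important design choice: if the model is undertrained or sees an unfamiliar situation, the NPC degrades to known, debuggable behavior instead of doing something strange.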

Imitation learning offers an interesting middle ground. Rather than training agents from scratch, you capture expert human play and teach networks to replicate those patterns. It’s faster to implement and quickly yields behavior that players recognize as competent. Some racing games already use this to create AI opponents that drive like actual players rather than following optimal but inhuman racing lines.
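The simplest form of this idea is behavior cloning: record (situation, action) pairs from a human and reproduce whatever the human did in the most similar recorded situations. The sketch below swaps the neural network for a k-nearest-neighbour lookup so it stays tiny. The two state features and the six demonstration samples are invented for illustration, not real telemetry.

```python
import math
from collections import Counter

# Hypothetical recorded demonstrations: (corner_sharpness, speed) -> driver action.
# Both features are normalized to 0.0-1.0.
DEMOS = [
    ((0.9, 0.9), "brake"),
    ((0.8, 0.7), "brake"),
    ((0.5, 0.5), "coast"),
    ((0.4, 0.6), "coast"),
    ((0.1, 0.4), "accelerate"),
    ((0.0, 0.8), "accelerate"),
]


def imitate(state, demos=DEMOS, k=3):
    """Behavior cloning via k-NN: copy the majority action of the k closest demos."""
    nearest = sorted(demos, key=lambda demo: math.dist(state, demo[0]))[:k]
    votes = Counter(action for _, action in nearest)
    return votes.most_common(1)[0][0]


print(imitate((0.85, 0.8)))
```

A network generalizes far better than a lookup table, but the contract is the same: the AI can only be as good, and as human, as the play it was shown.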

Procedural Intelligence: AI That Creates

Procedural content generation powered by neural architectures represents perhaps the most exciting frontier. We’ve had procedural generation for decades; roguelikes proved its value long ago. But generative AI systems produce output that is qualitatively different, and that difference is hard to overstate.

Modern systems don’t just randomize parameters within designer-defined boundaries. They can account for context, style, and narrative coherence. A dungeon generated by newer architectures considers pacing, visual variety, and difficulty progression in ways that feel designed rather than generated.

I recently played an indie title that generated quest dialogue using a constrained language model. The conversations weren’t perfect, but they felt remarkably fresh after dozens of hours. Every NPC had something slightly different to say, rooted in their role but not copied from a template. That’s a game-changer for replayability.

The Director Architecture Evolution

Left 4 Dead’s AI Director showed us years ago that meta-level AI managing player experience could transform gameplay. That concept has evolved considerably.

Modern adaptive AI directors don’t just spawn enemies based on stress metrics. They analyze play patterns, predict player skill trajectories, and adjust multiple systems simultaneously: enemy intelligence, resource availability, environmental hazards, and in some implementations even narrative pacing.

What makes current architectures different is their predictive capability. Rather than reacting to what happened, they anticipate what’s about to happen and prepare appropriate responses. The result is difficulty that feels challenging without becoming frustrating, a balance human designers struggle to achieve through static tuning.
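Here’s a minimal sketch of the difference between reacting and predicting. It’s a toy director loosely modelled on a build-up/relax cycle, not Valve’s actual implementation. Stress decays each tick and rises with incoming pressure. Instead of waiting for stress to cross the peak threshold, the director extrapolates the recent trend a few ticks ahead and backs off early. All thresholds, weights, and the spawn budgets are hypothetical.

```python
from collections import deque


class Director:
    """Toy predictive director; every constant here is a hypothetical tuning value."""

    def __init__(self, peak=0.8, calm=0.3, decay=0.95, horizon=3, window=5):
        self.stress = 0.0
        self.history = deque(maxlen=window)  # recent stress samples
        self.phase = "build_up"
        self.peak, self.calm, self.decay, self.horizon = peak, calm, decay, horizon

    def observe(self, damage_taken: float, enemies_nearby: int) -> None:
        # Stress decays every tick and rises with incoming pressure.
        raw = self.stress * self.decay + 0.05 * damage_taken + 0.02 * enemies_nearby
        self.stress = min(1.0, raw)
        self.history.append(self.stress)

    def predicted_stress(self) -> float:
        # Linear extrapolation of the recent trend `horizon` ticks ahead.
        if len(self.history) < 2:
            return self.stress
        slope = (self.history[-1] - self.history[0]) / (len(self.history) - 1)
        return max(0.0, min(1.0, self.stress + slope * self.horizon))

    def update_phase(self) -> str:
        if self.phase == "build_up" and self.predicted_stress() >= self.peak:
            self.phase = "relax"  # back off BEFORE the player is overwhelmed
        elif self.phase == "relax" and self.stress <= self.calm:
            self.phase = "build_up"
        return self.phase

    def spawn_budget(self) -> int:
        return 0 if self.phase == "relax" else 3


d = Director()
for _ in range(2):
    d.observe(damage_taken=4, enemies_nearby=5)
    d.update_phase()
print(d.phase, round(d.stress, 3))
```

After two heavy ticks the director switches to `relax` while actual stress is still below the peak threshold. A purely reactive version would keep spawning until the player was already overwhelmed.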

Real-Time Learning: The Holy Grail

Perhaps the most ambitious emerging architecture involves AI that learns during gameplay itself. Not between sessions or during development, but actively adapting to individual players in real time.

This remains technically demanding. Training neural networks requires significant computation, and doing it without impacting frame rates seemed impossible until recently. But optimization breakthroughs and dedicated neural processing units in modern hardware have opened new possibilities.

Some fighting games now feature training mode AI that learns player tendencies and develops counter-strategies. Racing games adapt opponent aggression based on player behavior patterns. These implementations remain limited in scope, but they point toward a future where every player faces uniquely tailored challenges.
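Real-time adaptation doesn’t always need a neural network. As a lightweight illustration, the sketch below learns a player’s move tendencies online by counting which move tends to follow which, then picks a counter to the predicted next move. Each update is a dictionary increment, cheap enough to run every frame. The move names and counter table are invented for an imaginary fighting game; the shipped games mentioned above may work very differently.

```python
from collections import Counter, defaultdict

# Hypothetical moves and the response that beats each one.
COUNTERS = {"high_kick": "duck", "low_sweep": "jump", "throw": "backstep"}


class TendencyModel:
    """Learns, during play, which move a player tends to use after each move."""

    def __init__(self):
        self.transitions = defaultdict(Counter)  # previous move -> next-move counts
        self.last_move = None

    def observe(self, move: str) -> None:
        if self.last_move is not None:
            self.transitions[self.last_move][move] += 1
        self.last_move = move

    def predict_next(self):
        # .get() avoids creating empty entries for unseen moves.
        options = self.transitions.get(self.last_move)
        if not options:
            return None
        return options.most_common(1)[0][0]

    def choose_response(self, default: str = "block") -> str:
        # Counter the predicted move, or fall back to a safe default.
        return COUNTERS.get(self.predict_next(), default)


model = TendencyModel()
for move in ["high_kick", "throw", "high_kick", "throw", "high_kick"]:
    model.observe(move)
print(model.choose_response())
```

A player who habitually follows a high kick with a throw will start seeing the AI step back just in time, and a player who breaks the habit resets the advantage.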

Challenges Nobody’s Solved Yet

Honesty requires acknowledging limitations. Emerging AI architectures introduce problems we’re still learning to address.

Explainability remains difficult. When a neural network makes decisions, understanding why often proves impossible. This makes debugging nightmarish and can create situations where AI behaves inappropriately without clear pathways to correction.

Consistency is another struggle. Players expect predictable game worlds even when they want surprising AI. Balancing emergent behavior with reliable experience requires careful constraint systems that sometimes undermine the very adaptability these architectures promise.

And then there’s the uncanny valley of intelligence. AI that’s almost human-like but not quite can feel more disturbing than obviously artificial systems. Finding the right level of sophistication for specific game contexts requires extensive testing and iteration.

Where We’re Heading

The trajectory seems clear even if the destination remains uncertain. Game AI is moving toward systems that feel less like programmed responses and more like genuine intelligence operating within fictional worlds.

This doesn’t mean traditional approaches disappear. Many games don’t need sophisticated AI. A platformer enemy that walks back and forth works perfectly fine. But for games attempting immersion, narrative depth, or competitive challenge, emerging architectures offer tools we didn’t have five years ago.

The studios investing in these technologies now will have significant advantages as player expectations evolve. Those treating AI as an afterthought may find their games feeling dated faster than they anticipated.

Frequently Asked Questions

What is utility AI in games?

Utility AI evaluates multiple factors to score potential actions, selecting behaviors based on contextual priorities rather than rigid decision trees. This creates more naturalistic, adaptive NPC behavior.

Can game AI actually learn during gameplay?

Yes, though implementations remain limited. Some modern games feature AI that adapts to player patterns in real time, particularly in fighting and racing genres.

Why don’t all games use neural network AI?

Computational cost, development complexity, and debugging difficulty make neural networks impractical for many projects. Traditional approaches remain more efficient for simpler AI requirements.

What is an AI Director in games?

An AI Director is a meta-level system that monitors the player experience and dynamically adjusts game elements (enemy spawning, difficulty, resources) to maintain optimal engagement.

Will advanced AI replace human game designers?

No. These tools augment human creativity by handling procedural elements while designers focus on vision, narrative, and experience crafting. Human direction remains essential.

How do imitation learning systems work?

They analyze recorded expert gameplay and train neural networks to replicate observed behaviors, producing competent AI without lengthy reinforcement learning training periods.